Forget chatbots. AI agents in e-commerce now reason, remember, and act autonomously. Here are 7 use cases reshaping how brands sell, support, and retain customers.

I've been reviewing a lot of "AI in e-commerce" content lately, and most of it makes the same mistake: it treats AI agents like fancy chatbots with better UX.

They're not. And if you're building for e-commerce clients, or shipping your own store, that misunderstanding will cost you.

This post is a ground-level breakdown of the 7 AI agent patterns that are actually moving metrics in 2026, how they work technically, and what separates the implementations that win from the ones that get ripped out after 90 days.

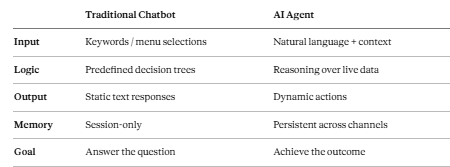

Before We Start: What Actually Makes Something an "AI Agent"?

The term is getting blurred fast. Here's the mental model I use:

The key difference is agency. A chatbot waits for the right input and pattern-matches to a response. An agent interprets intent, pulls from live data sources (OMS, CRM, carrier APIs, policy docs), decides what action serves the goal, and executes it.

That distinction matters more than it sounds. It changes your entire architecture.

1. The Autonomous Shopping Concierge

Most shoppers don't arrive at a storefront knowing exactly what they want. They arrive with context:

"I'm hosting a dinner party Saturday." "I need something that works in a humid climate." "My kid just started swimming lessons."

Traditional e-commerce forces them to translate that context into filters and keywords. It's friction they didn't ask for, and most of them just leave.

A shopping concierge agent removes that translation layer entirely.

How it works technically:

- Natural language input → intent extraction via LLM

- Intent mapped to product catalog via vector search or structured query

- Agent recommends complete bundles or collections, not isolated SKUs

- Reasoning chain considers price, availability, ratings, and user history
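The retrieval and ranking steps above can be sketched in a few lines. This is a toy illustration, not a production design: `embed` is a bag-of-words stand-in for a real embedding model, the raw query is used where an LLM would first extract intent, and `Product`, `recommend`, and the sample `CATALOG` are all hypothetical names invented for the example.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Product:
    sku: str
    description: str
    price: float
    in_stock: bool
    rating: float


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real build would call an embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def recommend(query: str, catalog: list[Product], budget: float, k: int = 3) -> list[Product]:
    """Rank in-stock, in-budget products by similarity; rating breaks ties."""
    q = embed(query)
    candidates = [p for p in catalog if p.in_stock and p.price <= budget]
    scored = [(cosine(q, embed(p.description)), p.rating, p) for p in candidates]
    scored = [s for s in scored if s[0] > 0]  # drop products with no overlap at all
    scored.sort(key=lambda s: (s[0], s[1]), reverse=True)
    return [p for _, _, p in scored[:k]]


# Hypothetical sample data for illustration only.
CATALOG = [
    Product("TBL-01", "linen tablecloth for dinner party hosting", 45.0, True, 4.6),
    Product("GLS-02", "set of wine glasses for dinner party", 60.0, True, 4.8),
    Product("SWM-03", "kids swimming goggles for lessons", 15.0, True, 4.4),
    Product("CND-04", "scented candles for dinner table", 20.0, False, 4.9),
]

picks = recommend("I'm hosting a dinner party Saturday", CATALOG, budget=100)
print([p.sku for p in picks])
```

Availability and price act as hard filters here, while similarity and rating only affect ordering. That split is a design choice for the sketch; a real concierge would likely weigh user history as well.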

The output feels like talking to a knowledgeable sales associate, not typing into a search bar. Shorter time-to-decision, higher average order value, lower bounce. The data consistently backs this up.

One implementation detail worth noting: the quality of your product catalog embeddings matters enormously here. A modest model searching a well-structured catalog with rich descriptions will usually beat a stronger model searching thin, inconsistent product data.

If you are prototyping this kind of conversational flow, Voiceflow is worth looking at. It is a developer-first agent builder that handles dialog management and multi-step reasoning well, and it has a reasonable API for connecting to custom product catalogs. Not the only option, but one that avoids rebuilding dialog state management from scratch.

2. Size, Fit, and Compatibility Guardians

Returns are one of e-commerce's most expensive problems, and most of them are completely avoidable. They happen because shoppers hope something will work instead of knowing it will.

AI agents solve this by acting as a confidence layer between the shopper and the product.

In fashion: the agent pulls previous purchase sizes, brand-specific sizing charts, and stored measurements to give a personalized recommendation rather than a generic "see size guide" link.

In electronics: the agent checks compatibility in real time from structured product specs. Router and modem. Lens and camera body. Charger and laptop. These are exactly the queries where customers currently give up or guess wrong.

In furniture: the agent factors in room dimensions, existing pieces, and style preferences before recommending anything.
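To make the electronics case concrete, here is a minimal sketch of a compatibility check driven purely by structured specs. The `Charger` and `Laptop` types and their fields are hypothetical; a real catalog would need richer attributes than connector and wattage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Charger:
    sku: str
    connector: str
    max_watts: int


@dataclass(frozen=True)
class Laptop:
    sku: str
    connector: str
    required_watts: int


def check_charger(charger: Charger, laptop: Laptop) -> tuple[bool, str]:
    """Return (compatible, reason) using only structured spec fields."""
    if charger.connector != laptop.connector:
        return False, f"Connector mismatch: {charger.connector} vs {laptop.connector}"
    if charger.max_watts < laptop.required_watts:
        return False, f"Charger supplies {charger.max_watts}W, laptop needs {laptop.required_watts}W"
    return True, "Connector and wattage match"


ok, reason = check_charger(Charger("CH-65", "usb-c", 65), Laptop("LT-14", "usb-c", 90))
print(ok, reason)
```

Returning the reason alongside the verdict lets the agent explain why something will not work, which is the part that builds shopper confidence.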

The outcome is confidence before checkout, which directly reduces post-purchase regret. Brands implementing this well are seeing return rate drops of 20-35%. That's not a chatbot win. That's an architecture win.

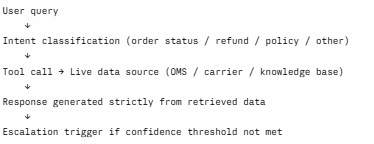

3. Hallucination-Free L1 Support Automation

Here's a failure mode I've seen repeatedly: teams deploy an LLM-based support agent, it confidently gives a wrong answer about a refund timeline or a shipping policy, the customer screenshots it, and now you have a trust problem that takes months to recover from.

In 2026, accuracy is not a feature. It is the baseline requirement. The fix is not a smarter model. It is grounding. Every response generated by the agent must be sourced from verified, live data: order management systems, carrier APIs, return policy documents, ERP data. The model's job is to format and communicate that data, not to invent it.

The architecture that works:

With this pattern, agents can autonomously resolve 60-80% of L1 queries with consistent accuracy, while escalating anything that requires judgment or empathy to a human.
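A minimal sketch of the grounding idea, under loud assumptions: `ORDERS` and `POLICIES` are in-memory stand-ins for a live OMS and policy store, the policy text is a placeholder, and the template strings stand in for the model's formatting step. The point it illustrates is that every answer carries a source, and a missing source means escalation rather than a guess.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-ins for live systems; the values are placeholders.
ORDERS = {"A1001": {"status": "shipped", "carrier": "UPS", "eta": "2026-03-14"}}
POLICIES = {"refund_timeline": "Refunds are issued within 5 business days of receiving the return."}

HANDOFF_TEXT = "I could not verify that, so I am bringing in a teammate."


@dataclass
class Answer:
    text: str
    source: Optional[str]  # where the facts came from; None means nothing was verified
    escalate: bool = False


def answer_order_status(order_id: str) -> Answer:
    order = ORDERS.get(order_id)
    if order is None:
        return Answer(HANDOFF_TEXT, source=None, escalate=True)
    text = f"Order {order_id} is {order['status']} via {order['carrier']}, expected {order['eta']}."
    return Answer(text, source=f"oms:{order_id}")


def answer_policy(topic: str) -> Answer:
    policy = POLICIES.get(topic)
    if policy is None:
        return Answer(HANDOFF_TEXT, source=None, escalate=True)
    return Answer(policy, source=f"policy:{topic}")


print(answer_order_status("A1001"))
print(answer_policy("price_match"))
```

Logging the `source` field with every reply also gives you an audit trail when a customer disputes what the agent said.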

The psychological payoff is underrated: customers don't just want fast answers. They want right answers. Consistency builds trust faster than speed.

For teams that want this without building the RAG pipeline from scratch, platforms like YourGPT let you ground agents directly in your own knowledge base, order data, and policies with native e-commerce integrations. Worth evaluating if you're on a tight build timeline.

4. Continuous Conversations That Persist Across Channels

One of the fastest ways to destroy customer trust is to make someone repeat themselves.

"As I mentioned on chat yesterday..." "I already explained this to someone on email..."

Every time a customer has to re-explain their situation, you've told them their time doesn't matter to you. That's a retention problem, not a UX problem.

AI agents with persistent memory prevent this entirely. Whether a conversation starts on live chat and continues on WhatsApp, or arrives via email three days later, the agent retains:

- Prior conversation context

- Unresolved issues

- Stated preferences

- Emotional tone history

**Implementation patterns that hold up in production:**

- Short-term: session state stored in a vector DB or structured memory store

- Long-term: customer profile enriched incrementally after each interaction

- Cross-channel: unified customer ID linking conversation threads across platforms
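The cross-channel piece can be sketched as an identity map sitting in front of a per-customer memory record. Everything here is hypothetical and in-memory (`MemoryStore`, `CustomerMemory`, the channel handles); a production build would back this with a database and a proper identity-resolution step.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CustomerMemory:
    preferences: dict[str, str] = field(default_factory=dict)
    open_issues: list[str] = field(default_factory=list)
    history: list[tuple[str, str]] = field(default_factory=list)  # (channel, message)


class MemoryStore:
    """Memory is keyed by customer ID; channel handles only map onto that ID."""

    def __init__(self) -> None:
        self._identities: dict[tuple[str, str], str] = {}
        self._memory: dict[str, CustomerMemory] = defaultdict(CustomerMemory)

    def link(self, channel: str, handle: str, customer_id: str) -> None:
        self._identities[(channel, handle)] = customer_id

    def for_handle(self, channel: str, handle: str) -> Optional[CustomerMemory]:
        customer_id = self._identities.get((channel, handle))
        return self._memory[customer_id] if customer_id is not None else None

    def record(self, channel: str, handle: str, message: str) -> None:
        memory = self.for_handle(channel, handle)
        if memory is None:
            raise KeyError(f"No customer linked to {channel}:{handle}")
        memory.history.append((channel, message))


store = MemoryStore()
store.link("chat", "sess-42", "cust-7")
store.link("whatsapp", "+15550100", "cust-7")
store.record("chat", "sess-42", "My blender arrived cracked")
print(store.for_handle("whatsapp", "+15550100").history)
```

Because both handles resolve to the same customer ID, the WhatsApp lookup sees what was said on chat without the customer repeating it.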

The business result is a sense of being recognized, which behavioral research consistently identifies as one of the strongest drivers of brand loyalty. It is also one of the hardest things to fake. Agents that do it well have a compounding retention advantage.

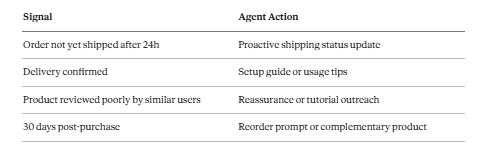

5. Proactive Post-Purchase Retention

Most e-commerce brands disappear the moment checkout completes, which is exactly the wrong time to go quiet. Post-purchase is when buyer anxiety peaks.

"Did my order actually go through?" "Is this going to arrive before Friday?" "Did I make the right choice?"

Agents can monitor post-purchase signals and intervene before doubt becomes regret:

These are not upsell plays. They are trust plays. The brands doing this well do not treat it as a revenue tactic; they treat it as the cost of keeping a customer they already paid to acquire.

The repeat purchase impact is significant and measurable. One-time buyers who receive a single well-timed post-purchase touchpoint convert to repeat at roughly 2x the baseline rate.
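As a rough sketch of what monitoring post-purchase signals could look like, the function below maps two illustrative signals to a proactive touchpoint. The signals themselves (a slipped carrier ETA, an unopened confirmation) and the `OrderSignal` type are assumptions chosen for the example, not a list taken from any specific platform.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class OrderSignal:
    order_id: str
    promised_by: date          # delivery date shown at checkout
    carrier_eta: date          # latest estimate from the carrier
    confirmation_opened: bool  # whether the order confirmation was opened


def next_touchpoint(sig: OrderSignal) -> Optional[str]:
    """Return a proactive action for the agent, or None if nothing is needed."""
    if sig.carrier_eta > sig.promised_by:
        delay = (sig.carrier_eta - sig.promised_by).days
        return f"Order {sig.order_id}: delivery is {delay} day(s) late; send the new date before the customer asks."
    if not sig.confirmation_opened:
        return f"Order {sig.order_id}: confirmation unopened; resend it on another channel."
    return None


print(next_touchpoint(OrderSignal("A1", date(2026, 3, 13), date(2026, 3, 15), True)))
```

The delay check runs first on purpose: a late delivery is the more anxiety-inducing signal, so it should win when both conditions hold.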

6. Real-Time Merchandising Intelligence from Conversations

This one is underimplemented and underappreciated. Every conversation an agent has is a structured data point. Every question, hesitation, or objection is a signal. Most brands are throwing this away.

When you aggregate agent conversations at scale, patterns emerge fast:

- Repeated questions about a sizing gap in a specific product line

- High hesitation rates on a particular price point

- Consistent confusion about a product feature that the listing doesn't explain well

- Frequent "do you carry X in Y" queries for variants you do not stock

That is direct, real-time feedback from your actual customers, surfaced without a survey, without a focus group, without waiting for a quarterly review.

The agents that capture and route this data to merchandising and product teams are creating a feedback loop that competitors without agents simply cannot replicate. The information asymmetry compounds over time.
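The aggregation step is simple once each conversation is tagged. The sketch below assumes every conversation has already been labeled with a `product_line` and a `signal` (both hypothetical field names, as are the label values); producing those labels is the harder part and is not shown.

```python
from collections import Counter


def top_signals(conversations: list[dict], min_count: int = 2) -> list[tuple[tuple[str, str], int]]:
    """Count (product_line, signal) pairs and keep those seen at least min_count times."""
    counts = Counter((c["product_line"], c["signal"]) for c in conversations)
    return [(key, n) for key, n in counts.most_common() if n >= min_count]


# Hypothetical tagged conversations for illustration only.
conversations = [
    {"product_line": "denim", "signal": "sizing_gap"},
    {"product_line": "sofas", "signal": "price_hesitation"},
    {"product_line": "denim", "signal": "sizing_gap"},
    {"product_line": "lamps", "signal": "variant_request"},
    {"product_line": "denim", "signal": "sizing_gap"},
    {"product_line": "sofas", "signal": "price_hesitation"},
]

for (line, signal), n in top_signals(conversations):
    print(f"{line}: {signal} x{n}")
```

The `min_count` floor keeps one-off questions out of the report that goes to merchandising.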

7. Intelligent Human-in-the-Loop Escalation

The best AI agents know when not to act alone. This is a design principle, not a fallback.

When emotional intensity rises (a frustrated customer, a complex dispute, a potential fraud case), the agent should escalate, but it should never escalate blindly. A cold handoff where the human agent has to start from scratch is just as bad as no escalation at all.

The right pattern is a warm escalation: the agent hands off a complete summary, sentiment analysis, relevant order data, and suggested resolution options. The human steps in with full context and can focus entirely on judgment and empathy.

Signals worth triggering escalation on:

- Negative sentiment score above a defined threshold

- Query type classified as high-stakes (fraud, legal, health, safety)

- Repeated failed resolution attempts within the same session

- User explicitly requests a human
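These four triggers, plus the warm handoff payload, translate fairly directly into code. This is a sketch under assumptions: the threshold values, the `SessionState` fields, and the shape of the handoff dictionary are all illustrative, and the sentiment score is assumed to come from some upstream classifier.

```python
from dataclasses import dataclass, field
from typing import Optional

HIGH_STAKES = {"fraud", "legal", "health", "safety"}


@dataclass
class SessionState:
    negativity: float  # assumed scale: 0.0 calm .. 1.0 very negative
    query_type: str
    failed_attempts: int
    asked_for_human: bool
    summary: str = ""
    order_data: dict = field(default_factory=dict)
    suggested_resolutions: list[str] = field(default_factory=list)


def escalation_reasons(s: SessionState, negativity_threshold: float = 0.7,
                       max_failed_attempts: int = 2) -> list[str]:
    reasons = []
    if s.negativity > negativity_threshold:
        reasons.append("negative_sentiment")
    if s.query_type in HIGH_STAKES:
        reasons.append("high_stakes_query")
    if s.failed_attempts >= max_failed_attempts:
        reasons.append("repeated_failures")
    if s.asked_for_human:
        reasons.append("user_requested_human")
    return reasons


def build_handoff(s: SessionState) -> Optional[dict]:
    """Return a warm-handoff payload, or None if the agent should keep going."""
    reasons = escalation_reasons(s)
    if not reasons:
        return None
    return {
        "reasons": reasons,
        "summary": s.summary,
        "sentiment": s.negativity,
        "order_data": s.order_data,
        "suggested_resolutions": s.suggested_resolutions,
    }


print(build_handoff(SessionState(0.9, "fraud", 0, False, summary="Card charged twice")))
```

Returning the list of reasons, rather than a bare boolean, lets the human queue prioritize a fraud case differently from a simple "talk to a person" request.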

This is where the human-plus-AI model genuinely outperforms either alone. AI handles volume and consistency. Humans handle the cases that require reading between the lines. The split only works if the handoff is clean.

In practice, this handoff layer is where a lot of custom builds fall apart because it gets treated as an afterthought. Tools like Gorgias are worth studying here, not necessarily as a drop-in solution, but because their approach to AI-to-human ticket routing in e-commerce support is well thought out. The way they structure context passing between the automated layer and the human queue is a useful reference pattern regardless of what you are building on.

The Stack Underneath All of This

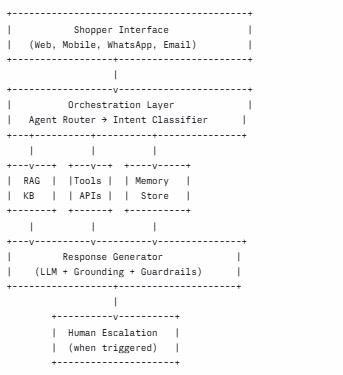

If you are building any of these patterns, here is the reference architecture worth starting from:

**A few notes from real implementations:**

The intent classifier is where most teams under-invest. A weak classifier routes queries to the wrong tool and creates the hallucination problems described in #3.

The memory store design determines whether cross-channel persistence (#4) works in practice. Build this with the customer ID as the primary key from day one, not as an afterthought.

Guardrails are not optional. Define what the agent is not allowed to do (make price promises, override policy, discuss competitors) before you deploy.
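One way to make the guardrail point enforceable is a default-deny allowlist checked before any action runs. The sketch below is an assumption about how that could look: the action names, the `Guardrails` type, and the refund cap are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    allowed_actions: frozenset[str]
    max_refund: float


def authorize(action: str, rules: Guardrails, amount: float = 0.0) -> tuple[bool, str]:
    """Default-deny: anything not explicitly allowed is refused."""
    if action not in rules.allowed_actions:
        return False, f"'{action}' is not on the allowlist; escalate to a human"
    if action == "issue_refund" and amount > rules.max_refund:
        return False, f"Refund of {amount:.2f} exceeds the cap of {rules.max_refund:.2f}"
    return True, "ok"


# Hypothetical rule set: price promises and policy overrides are simply absent.
RULES = Guardrails(frozenset({"lookup_order", "issue_refund", "send_tracking_link"}), max_refund=50.0)

print(authorize("promise_price_match", RULES))
print(authorize("issue_refund", RULES, amount=30.0))
```

An allowlist is used instead of a blocklist so that new, unreviewed actions are refused by default rather than permitted by omission.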

**Where to Actually Start**

If I were starting a new e-commerce AI agent build today, this is the order I would do it in:

1. L1 support automation first. It is the fastest win, the most measurable, and the least risky. Ground it in real data before anything else.

2. Build memory and escalation in parallel. These are infrastructure decisions that are painful to retrofit.

3. Add the shopping concierge layer once support is stable. Conversion tooling built on a shaky foundation will create more problems than it solves.

4. Instrument every conversation from day one. The merchandising intelligence in #6 only works if you are collecting structured data from the start.

5. Post-purchase automation last. It is high value, but it depends on everything else working first.

The gap between brands that are operationalizing AI agents now and those still running rule-based chatbots is widening every quarter. The infrastructure is genuinely ready. The question is whether you build it well or build it fast, because in most cases you only get one shot before the business loses patience.

If you have shipped an AI agent for e-commerce, I would genuinely like to hear what broke first. Drop it in the comments.